OWL2RDFParserDeclarationRequirement

It turns out that the OWL 2 specifications contain a very detailed definition of how an RDF serialization of an OWL 2 ontology should be parsed. I think that for practical purposes this definition is a bit rigid. It is very useful for an OWL 2 parser to be able to handle files that are slightly malformed. A good OWL 2 tool can then write the ontology out in a manner that fixes any problems found during the parse.

In this note, I am going to argue that a fully OWL 2 compliant parser should reject the following simple ontology:

```xml
<?xml version='1.0' encoding='UTF-8'?>
<!DOCTYPE rdf:RDF [
  <!ENTITY owl 'http://www.w3.org/2002/07/owl#'>
  <!ENTITY rdf 'http://www.w3.org/1999/02/22-rdf-syntax-ns#'>
]>
<rdf:RDF
    xmlns:owl="&owl;"
    xmlns:rdf="&rdf;">

  <owl:Ontology rdf:about="http://example.org#test"/>

  <!--
  <owl:ObjectProperty rdf:about="http://example.org#r"/>
  <owl:Class rdf:about="http://example.org#y"/>
  <owl:Class rdf:about="http://example.org#z"/>
  -->

  <owl:Class rdf:about="http://example.org#x">
    <owl:equivalentClass>
      <owl:Class>
        <owl:intersectionOf rdf:parseType="Collection">
          <rdf:Description rdf:about="http://example.org#y"/>
          <owl:Restriction>
            <owl:onProperty rdf:resource="http://example.org#r"/>
            <owl:someValuesFrom rdf:resource="http://example.org#z"/>
          </owl:Restriction>
        </owl:intersectionOf>
      </owl:Class>
    </owl:equivalentClass>
  </owl:Class>
</rdf:RDF>
```

The problem with this ontology is that the declarations of the property r and the classes y and z are missing (they are commented out). If these declarations are uncommented, the ontology parses without any problem. To simplify the discussion, I will focus on the declaration of the property r.

The OWL 2 specifications contain a fairly comprehensive description of how OWL 2 ontologies should be serialized to RDF and how RDF graphs should be parsed back into OWL 2 ontologies. For the purposes of this note, I will focus on the second of these.

Suppose that we have an ontology containing the following restriction:

```xml
<owl:Restriction>
  <owl:onProperty rdf:resource="http://example.org#r"/>
  <owl:someValuesFrom rdf:resource="http://example.org#z"/>
</owl:Restriction>
```

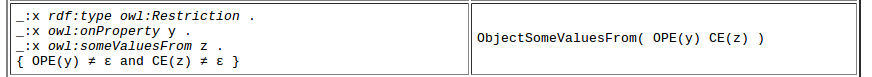

There is a subsection inside of the parser specification that explains how this restriction is supposed to be parsed. Here is a screenshot showing the relevant specification from table 13:

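Everything looks fine so far, but the thing to notice is the pair of conditions $\mathrm{OPE}(y) \neq \epsilon$ and $\mathrm{CE}(z) \neq \epsilon$ on the lower left. In the table, y stands for the property of the restriction and z for its filler, so in our example they correspond to r and z. Essentially, these two conditions say that y must have an interpretation as an object property expression and z must have an interpretation as a class expression. If either value is undefined ($\epsilon$), this table entry does not apply.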
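To make the side condition concrete, here is a minimal Python sketch of this one table entry. It rests on assumptions of my own rather than on the specification's wording: the graph G is a set of (subject, predicate, object) string tuples using prefixed names such as `ex:r`, blank nodes start with `_:`, and OPE and CE are dictionaries in which a missing key stands for $\epsilon$. The function name `parse_some_values_from` is hypothetical.

```python
from typing import Dict, Set, Tuple

# Simplified model (assumption): a triple is a tuple of prefixed-name strings.
Triple = Tuple[str, str, str]


def parse_some_values_from(
    graph: Set[Triple], ope: Dict[str, str], ce: Dict[str, str]
) -> Dict[str, str]:
    """Apply a simplified version of the owl:someValuesFrom entry of Table 13.

    A missing key in `ope` or `ce` plays the role of epsilon (undefined).
    Triples matched by the rule are removed from `graph`, i.e. consumed.
    """
    result: Dict[str, str] = {}
    restrictions = sorted(
        s for (s, p, o) in graph if p == "rdf:type" and o == "owl:Restriction"
    )
    for x in restrictions:
        ys = [o for (s, p, o) in graph if s == x and p == "owl:onProperty"]
        zs = [o for (s, p, o) in graph if s == x and p == "owl:someValuesFrom"]
        if len(ys) != 1 or len(zs) != 1:
            continue  # not the shape this table entry describes
        y, z = ys[0], zs[0]
        # Side condition: OPE(y) != epsilon and CE(z) != epsilon.
        if y not in ope or z not in ce:
            continue  # the entry does not apply, so the triples stay in G
        result[x] = f"ObjectSomeValuesFrom({ope[y]} {ce[z]})"
        graph.difference_update({
            (x, "rdf:type", "owl:Restriction"),
            (x, "owl:onProperty", y),
            (x, "owl:someValuesFrom", z),
        })
    return result
```

Called with an empty OPE map, which is what happens when r is not declared, the function returns an empty result and leaves all three restriction triples in the graph.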


For simplicity, I will now focus on $\mathrm{OPE}(y)$ and try to show that y must either be declared as an object property in the RDF file or be an inverse. To do this, I go back to the top of the parser specification and search for every occurrence of OPE to see how it is computed. There are several hits, but only a couple of places where OPE is actually assigned a value. The first is in the Analyzing Declarations section. Table 9 essentially says that OPE of an IRI is set to the object property with that name if that object property is declared. How the declarations are extracted is defined in the preceding section. Thus, for OPE of an IRI to be defined, there must be an object property declaration for that property.
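The declaration step can be sketched in the same simplified way. This is my own illustration, not the specification's algorithm: G is again a set of string triples, `analyze_declarations` is a hypothetical name, only object property and class declarations are handled, and built-in vocabulary such as owl:Thing is ignored.

```python
from typing import Dict, Set, Tuple

# Simplified model (assumption): a triple is a tuple of prefixed-name strings.
Triple = Tuple[str, str, str]


def analyze_declarations(graph: Set[Triple]) -> Tuple[Dict[str, str], Dict[str, str]]:
    """Build simplified OPE and CE maps from declaration triples.

    Only IRIs can be declared, so blank-node subjects ("_:...") are skipped.
    Any IRI absent from the returned maps has OPE/CE equal to epsilon.
    """
    ope: Dict[str, str] = {}
    ce: Dict[str, str] = {}
    for (s, p, o) in graph:
        if p != "rdf:type" or s.startswith("_:"):
            continue
        if o == "owl:ObjectProperty":
            ope[s] = s  # OPE(s) is the object property named s
        elif o == "owl:Class":
            ce[s] = s  # CE(s) is the class named s
    return ope, ce
```

With the declarations commented out, `ex:r` never enters `ope`; nothing else in this step can put it there.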

The second place where OPE is assigned a value is in the section describing how property expressions should be parsed. Table 11 states that $\mathrm{OPE}(\_{:}x)$ is set to the inverse of $\mathrm{OPE}({*}{:}y)$ when an owl:inverseOf triple with a blank-node subject appears in the RDF file. Note that here, again, there is the small condition $\mathrm{OPE}({*}{:}y) \neq \epsilon$ on the lower right-hand side of the table entry, so this rule can only build the inverse of a property whose OPE is already defined. If you keep searching forward for OPE, you will find many more hits, but nothing else that assigns it a value.
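A short sketch shows why the inverse rule cannot rescue an undeclared property: it only ever builds on an OPE value that already exists. The triple model is the same simplified assumption as before, and `parse_inverses` is a hypothetical name.

```python
from typing import Dict, Set, Tuple

# Simplified model (assumption): a triple is a tuple of prefixed-name strings.
Triple = Tuple[str, str, str]


def parse_inverses(graph: Set[Triple], ope: Dict[str, str]) -> None:
    """Simplified version of the owl:inverseOf entry of Table 11.

    For a triple (_:x owl:inverseOf y) where y is an IRI and OPE(y) is defined,
    set OPE(_:x) = ObjectInverseOf(OPE(y)) and consume the triple.
    Updates `graph` and `ope` in place.
    """
    for (x, p, y) in sorted(graph):  # iterate over a sorted copy
        if p != "owl:inverseOf" or not x.startswith("_:") or y.startswith("_:"):
            continue
        if y not in ope:
            continue  # OPE(y) is epsilon, so the rule cannot fire
        ope[x] = f"ObjectInverseOf({ope[y]})"
        graph.discard((x, p, y))
```

If `ex:r` is missing from `ope`, the call changes nothing and the owl:inverseOf triple stays in the graph.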

There is one final step in the argument. At the very end of section 3 there is a sentence that says:

At the end of this process, the graph G MUST be empty.

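The easiest way to find this sentence is to go to the first appendix and scroll up a little. It means that every triple must be accounted for by the parse. This is actually an important requirement, because otherwise some data would be lost after a parse and a save. In our example, the triples of the restriction on r can never be consumed, because OPE(r) is never defined, so G is not empty at the end and a compliant parser must reject the ontology.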
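Putting the pieces together, the following toy strict parser runs a declaration step, the someValuesFrom rule, and then the emptiness check. It is a deliberately tiny model under my own assumptions: it drops the surrounding owl:equivalentClass and owl:intersectionOf structure of the example, uses the same string-triple representation, and the names `strict_parse` and `UnconsumedTriplesError` are hypothetical.

```python
from typing import Dict, Set, Tuple

# Simplified model (assumption): a triple is a tuple of prefixed-name strings.
Triple = Tuple[str, str, str]


class UnconsumedTriplesError(Exception):
    """Raised when triples are left in G after all rules have run."""


def strict_parse(triples: Set[Triple]) -> Dict[str, str]:
    """Toy strict parser: declarations, one restriction rule, then the emptiness check."""
    graph = set(triples)  # work on a copy of G
    ope: Dict[str, str] = {}
    ce: Dict[str, str] = {}

    # Step 1: consume declaration triples for IRIs (blank nodes cannot be declared).
    for t in sorted(graph):
        s, p, o = t
        if p == "rdf:type" and not s.startswith("_:"):
            if o == "owl:ObjectProperty":
                ope[s] = s
                graph.discard(t)
            elif o == "owl:Class":
                ce[s] = s
                graph.discard(t)

    # Step 2: someValuesFrom restrictions, guarded by OPE(y) != eps and CE(z) != eps.
    parsed: Dict[str, str] = {}
    restrictions = sorted(
        s for (s, p, o) in graph if p == "rdf:type" and o == "owl:Restriction"
    )
    for x in restrictions:
        ys = [o for (s, p, o) in graph if s == x and p == "owl:onProperty"]
        zs = [o for (s, p, o) in graph if s == x and p == "owl:someValuesFrom"]
        if len(ys) == 1 and len(zs) == 1 and ys[0] in ope and zs[0] in ce:
            parsed[x] = f"ObjectSomeValuesFrom({ope[ys[0]]} {ce[zs[0]]})"
            graph.difference_update({
                (x, "rdf:type", "owl:Restriction"),
                (x, "owl:onProperty", ys[0]),
                (x, "owl:someValuesFrom", zs[0]),
            })

    # Step 3: "At the end of this process, the graph G MUST be empty."
    if graph:
        raise UnconsumedTriplesError(f"unconsumed triples: {sorted(graph)}")
    return parsed
```

With declarations for `ex:r` and `ex:z` added, the restriction parses and G ends up empty; without them, the three restriction triples are left over and the parse is rejected.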

In a way, this is all very clean and mathematically precise. The parse must succeed all the way from the ground up. It does not allow any inference to determine missing types of resources; it requires that all the resources have types that are declared in the ontology. It is a bit draconian for a practical parser, though. I think that the parser should fill in any holes when it can, so that the user can see the results of the parse. But the tool should then also write the ontology out with all the holes filled, so that it now conforms to the specification.
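One way a more forgiving tool could fill such holes is to guess declarations from how undeclared IRIs are used, and then write those declarations out with the ontology. The Python sketch below is only an illustration under stated assumptions, not part of the OWL 2 specification or of any existing tool: it uses the same simplified string-triple model, the helper names are hypothetical, and the heuristics (for example, treating a filler with an `xsd:` prefix as a datatype) are guesses that a real parser would need to refine.

```python
from typing import List, Set, Tuple

# Simplified model (assumption): a triple is a tuple of prefixed-name strings.
Triple = Tuple[str, str, str]

# Assumed markers for data ranges; a real tool would need a proper check.
DATATYPE_PREFIXES = ("xsd:", "rdfs:Literal")


def _is_iri(term: str) -> bool:
    return not term.startswith("_:")


def _is_datatype(term: str) -> bool:
    return term.startswith(DATATYPE_PREFIXES)


def _list_members(graph: Set[Triple], head: str) -> List[str]:
    """Collect the rdf:first values of an RDF list; stop on malformed or cyclic lists."""
    members: List[str] = []
    seen: Set[str] = set()
    while head != "rdf:nil" and head not in seen:
        seen.add(head)
        firsts = [o for (s, p, o) in graph if s == head and p == "rdf:first"]
        rests = [o for (s, p, o) in graph if s == head and p == "rdf:rest"]
        if len(firsts) != 1 or len(rests) != 1:
            break
        members.append(firsts[0])
        head = rests[0]
    return members


def infer_missing_declarations(graph: Set[Triple]) -> Set[Triple]:
    """Guess declaration triples for IRIs that are used but never typed.

    Heuristics (assumptions, not part of the OWL 2 specification):
      * the owl:onProperty of a someValuesFrom restriction is an object property,
        or a data property when the filler looks like a datatype;
      * an IRI filler that is not a datatype is a class;
      * IRI members of an owl:intersectionOf list on an owl:Class are classes.
    """
    typed = {s for (s, p, o) in graph if p == "rdf:type"}
    guesses: Set[Triple] = set()
    class_candidates: Set[str] = set()

    for (x, p, y) in graph:
        if p == "owl:onProperty" and _is_iri(y):
            for z in [o for (s, q, o) in graph if s == x and q == "owl:someValuesFrom"]:
                kind = "owl:DatatypeProperty" if _is_datatype(z) else "owl:ObjectProperty"
                if y not in typed:
                    guesses.add((y, "rdf:type", kind))
                if _is_iri(z) and not _is_datatype(z):
                    class_candidates.add(z)
        elif p == "owl:intersectionOf" and (x, "rdf:type", "owl:Class") in graph:
            class_candidates.update(m for m in _list_members(graph, y) if _is_iri(m))

    for c in class_candidates:
        if c not in typed and not _is_datatype(c):
            guesses.add((c, "rdf:type", "owl:Class"))
    return guesses
```

Applied to the triples of the example ontology (writing `ex:` for `http://example.org#`), this proposes exactly the three commented-out declarations: an object property declaration for `ex:r` and class declarations for `ex:y` and `ex:z`. A tool could add these to G before running the strict rules and include them when it saves the ontology.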