Protege4ClientServer

Introduction

These are some pages under development to document the Protege 4 Server. We are hoping to release an early alpha soon.

This server is in an early alpha stage, and it is recommended that you back up critical files often.

What is it?

The Protege OWL Server provides a platform for collaborative editing and version control of a collection of ontologies. The Protege server tracks changes made to its ontologies, enforces an access control policy for its documents and checks for conflicts between its clients. When used with the Protege client, ontology editors can view and modify a shared ontology in parallel. If an editor chooses, the editor can watch changes made by other editors as they occur. To change an ontology, an editor first makes the changes in his local copy of the ontology. When he is happy with his changes, he can commit them, making them available to other editors of the ontology. Alternatively, an editor making changes to his local copy can save his copy of the changes and commit them in a later session.

In addition, the Protege OWL Server can be used as something more like a simple version control system. We are developing a set of command line tools that will be able to use a Protege OWL Server to provide such traditional version control services as checkin, checkout, update, commit and history query commands. The Protege 4 client can be used in this manner as well: an ontology editor can choose not to turn on auto-update and make all his updates and commits manually.

Comparison with the Protege 3 Server

There are several differences between the Protege 3 Server and the Protege 4 Server:

- The local copy.

- In Protege 3, when a client connects to the server, any change made in the client is immediately reflected on the server. In Protege 4, in contrast, changes are propagated to the Protege server only when the user commits them. This allows a user of a Protege client to consider his changes before sending them to the server. This is a significant enough concept that we describe it in more detail below.

- Decoupled client-server.

- In Protege 3, when the server goes down or the network is interrupted, the Protege 3 client either freezes or crashes. In contrast, in Protege 4, if the server stops or is inaccessible, the Protege client continues running normally. It is only when some server operation is attempted, such as an update or commit, that the user may become aware that there is a problem communicating with the server.

- Commit granularity.

- In Protege 3, changes are sent to the server as they are made. In Protege 4, a collection of changes is committed only when the user is ready, and the user can add a commit comment describing the nature of the changes.

- Optional automatic update.

- In Protege 3, a user sees edits from other users as they occur. In Protege 4, this is optional. This allows, for instance, a user to start a reasoner and query the ontology state without worrying that the ontology will change while the query is in progress.
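The Protege 4 behaviour described in this comparison (edit locally, commit a batch of changes with a comment, update on request) can be pictured with a small toy model. This is only an illustrative sketch: ToyServer and ToyClient are hypothetical names, not part of the Protege OWL Server API. Changes are modelled as plain strings that are only ever added, and conflict checking and access control are left out entirely.

```python
from dataclasses import dataclass, field


@dataclass
class ToyServer:
    """Hypothetical stand-in for the server: one change set per revision."""
    history: list = field(default_factory=list)  # [(comment, [axiom, ...]), ...]

    @property
    def revision(self) -> int:
        # Revision 0 is the empty ontology; every commit adds one revision.
        return len(self.history)

    def commit(self, changes, comment):
        self.history.append((comment, list(changes)))
        return self.revision

    def changes_since(self, revision):
        return [axiom
                for _comment, changes in self.history[revision:]
                for axiom in changes]


@dataclass
class ToyClient:
    server: ToyServer
    revision: int = 0                             # last server revision merged locally
    axioms: set = field(default_factory=set)      # the local copy
    pending: list = field(default_factory=list)   # uncommitted local edits

    def edit(self, axiom):
        """Change the local copy only; the server is not contacted."""
        self.axioms.add(axiom)
        self.pending.append(axiom)

    def commit(self, comment):
        """Send all pending edits as one change set with a commit comment."""
        new_revision = self.server.commit(self.pending, comment)
        self.pending.clear()
        return new_revision

    def update(self):
        """Manually pull every change set committed since the last update."""
        self.axioms.update(self.server.changes_since(self.revision))
        self.revision = self.server.revision


server = ToyServer()
alice, bob = ToyClient(server), ToyClient(server)
alice.edit("Cat SubClassOf Animal")   # local only, server still at revision 0
alice.commit("Add Cat")               # server moves to revision 1
bob.update()                          # bob now sees the new axiom
print(server.revision, sorted(bob.axioms))
```

In this model, a client that never calls update() keeps a stable view of the ontology, which corresponds to leaving auto-update turned off while a reasoner query runs.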

The Local Copy/Sandbox

With the Protege 4 client server, when a user checks an ontology out from the server, he gets a separate copy of the server ontology. The user can then modify this copy in any way that he likes and the changes will not go to the server until the user commits the changes.

In fact, this local copy can be saved to disk and then even edited with a different editor than Protege before it is committed to the server. Specifically, a user can:

- start Protege and load an ontology from the server,

- save the ontology somewhere on the local disk,

- exit Protege and edit the ontology with a text editor,

- restart Protege and open the ontology from disk,

- commit the changes, which will include the changes made with the text editor.

When the file is saved, Protege also saves some files that record which server provides the ontology document, the location of the document on the server and the revision of the ontology document on the server. So if I save an ontology as Thesaurus-redmond.owl in the client.ontologies directory, then Protege saves the following files:

- client.ontologies

o Thesaurus-redmond.owl

- .owlserver

o Thesaurus-redmond.owl.history

o Thesaurus-redmond.owl.vontology

The Thesaurus-redmond.owl.vontology contains information that describes the relationship between the ontology in Thesaurus-redmond.owl and the document on the server. The Thesaurus-redmond.owl.history contains a local cache of the history of changes made to the ontology document on the server. It is not required - if it is deleted it will be rebuilt - but it provides significant performance advantages for the client especially in the case where either the network is slow or the ontology is large.
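As a rough illustration of this naming scheme, the sketch below computes where the two companion files would be expected for a given saved ontology. It assumes that the .owlserver directory sits in the same directory as the saved ontology file, which the listing above suggests but does not state outright. The function names sidecar_files and is_server_backed are hypothetical helpers, not part of Protege.

```python
from pathlib import Path


def sidecar_files(ontology_file):
    """Return the expected .vontology and .history paths for a saved ontology."""
    ontology = Path(ontology_file)
    # Assumption: .owlserver lives next to the saved ontology file.
    meta_dir = ontology.parent / ".owlserver"
    return {
        "vontology": meta_dir / (ontology.name + ".vontology"),
        "history": meta_dir / (ontology.name + ".history"),
    }


def is_server_backed(ontology_file):
    """True if the file linking the local copy to a server document exists."""
    return sidecar_files(ontology_file)["vontology"].is_file()


for kind, path in sidecar_files("client.ontologies/Thesaurus-redmond.owl").items():
    print(kind, path)
```

Only the .vontology file is checked because, as noted above, the .history file is a cache that is rebuilt if it is missing.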

Videos

Here are some videos that I am making to demonstrate server features:

- Protege OWL Client-Server Basics. This video shows how to

- access a server,

- upload an ontology,

- follow changes made by another user with auto-update,

- have an extended session with the server spanning multiple Protege sessions.

- Accessing the Protege OWL Server from the command line. This video shows how to use the command line client to

- browse the Protege OWL server directories and ontologies with the pos-list command.

- upload ontologies to the Protege OWL server (pos-upload).

- checkout ontologies from the Protege OWL server (pos-checkout).

- commit changes back to the Protege OWL server (pos-commit).

- support the use of ontology editing tools other than Protege to edit a shared ontology from the Protege OWL server.

Large ontologies on a slow network

When a large ontology is being uploaded to or downloaded from a server on a slow network, it can take a while to transfer all the necessary data. Unfortunately, we have not yet determined how best to monitor and report the progress of this operation, so the user doing the upload or download will have little indication of the progress. The good news is that the user only needs to experience this once for the initial upload of the ontology to the server and once for his initial download of the ontology. In addition, since the upload of an ontology is a one-time operation, it is very likely that it can be performed on a faster network.

The issue concerns the change history stored on the server representing the set of changes between revision 0 and revision 1. These changes consist of the full set of changes needed to create the initial version of the ontology. Thus, for instance, if the NCI Thesaurus is uploaded onto the server, the set of changes to go from revision 0 (the empty ontology) to revision 1 (the initial version of the Thesaurus on the server) will contain over 1.2 million individual changes. This change set is stored in a 300 MB file which then needs to be transferred to any client that wants a copy of the ontology. (In point of fact, this change set gets compressed before it hits the network, so the actual data copied across the wire is only about 44 MB.)

Once the ontology is downloaded to the client, the client can save the ontology with the change history to disk for later reference. When the ontology is reloaded from the disk, the client will already have a copy of the 1.2 million changes from revision 0 to revision 1 and will not need to download it again from the server.
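To get a feel for what these figures mean in practice, here is a back-of-the-envelope calculation. The change count and file sizes are the ones quoted above for the NCI Thesaurus; the link speeds are hypothetical examples, and the estimate ignores latency, protocol overhead and the time spent compressing and applying the changes.

```python
def transfer_seconds(size_mb, link_mbit_per_s):
    """Seconds to move size_mb megabytes over the given link (1 MB = 8 Mbit)."""
    return size_mb * 8 / link_mbit_per_s


CHANGES = 1_200_000   # "over 1.2 million" individual changes
RAW_MB = 300          # size of the stored change set
WIRE_MB = 44          # approximate compressed size sent over the network

bytes_per_change = RAW_MB * 1_000_000 / CHANGES   # average uncompressed size
compression_ratio = RAW_MB / WIRE_MB

print(f"about {bytes_per_change:.0f} bytes per change, "
      f"compression about {compression_ratio:.1f} to 1")

for mbit in (1, 10, 100):   # hypothetical link speeds in Mbit/s
    print(f"{mbit:>3} Mbit/s: "
          f"{transfer_seconds(WIRE_MB, mbit):6.0f} s compressed, "
          f"{transfer_seconds(RAW_MB, mbit):6.0f} s uncompressed")
```

On a 1 Mbit/s link, for example, the compressed change set takes roughly six minutes (352 seconds) instead of the 40 minutes (2400 seconds) the uncompressed file would need.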

Installation details

Prerequisites

For the client installation, the only prerequisite is that you have successfully installed Protege. For the server installation, you must have installed:

- a copy of Oracle's Java (1.6 or 1.7).

Note that Windows systems come with their own version of Java, but we have had trouble with this version in some situations. The OpenJDK Java on Linux does work, however.
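One way to check which Java a machine will use is to look at the banner printed by java -version. The following is a minimal sketch of such a check, not something shipped with the server: the helper names are hypothetical, and the version line alone does not say whether the Java comes from Oracle or is an OpenJDK build.

```python
import re
import subprocess


def parse_java_version(banner):
    """Extract (major, minor) from a banner line such as: java version "1.7.0_09"."""
    match = re.search(r'version "(\d+)(?:\.(\d+))?', banner)
    if match is None:
        raise ValueError("no Java version found in: %r" % banner)
    return int(match.group(1)), int(match.group(2) or 0)


def installed_java_version(java_command="java"):
    """Run `java -version` and parse its banner, which is written to stderr."""
    result = subprocess.run([java_command, "-version"],
                            stdout=subprocess.PIPE, stderr=subprocess.PIPE,
                            universal_newlines=True)
    return parse_java_version(result.stderr)


def is_documented_version(version):
    """True for the versions named in the prerequisites (1.6 or 1.7)."""
    return version in {(1, 6), (1, 7)}


print(is_documented_version(parse_java_version('java version "1.7.0_09"')))
```

Calling installed_java_version() with the path that will be entered in the installer's Java Command field checks that particular Java rather than whichever one happens to be first on the PATH.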

It is also recommended that you create a user account to run the Protege OWL Server. Ideally this user account will have minimal access to the system. On a Linux machine, this account can be created with a command like the following:

adduser --system --home /usr/local/protege protege

Client Installation

When the Protege OWL Server is released, the latest Protege distribution will include the latest version of the plugins needed to access the Protege OWL Server. In the meantime, to allow Protege to access the server, you need to download the following files and copy them to the Protege plugins directory:

- the server library,

- the Protege client plugin and

- the latest OWL API (version 3.2.4).

Server Installation

This page describes how to install the Protege OWL server. First download the self-extracting installer. To run the installer, you must run the command

java -jar owl-server-installer.jar

with administrator privileges. This is done in a slightly different way on Windows than on the Unix-based operating systems (OS X and Linux).

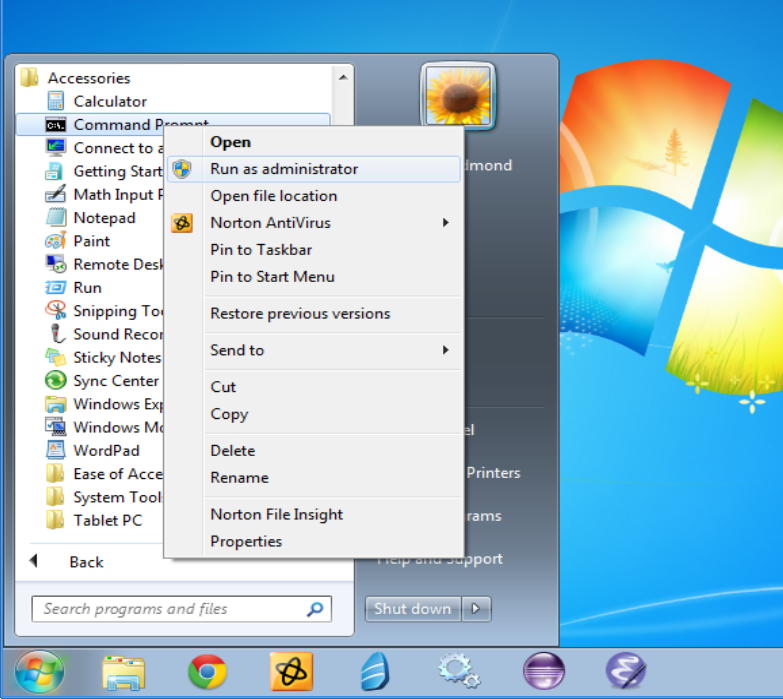

On Windows, you open a command prompt with administrative privileges with the following steps:

- left-click on the Start button and click on Accessories,

- right-click on the "Command Prompt" icon and click on "Run as administrator",

- when Windows asks if you want the "Windows Command Processor" to make changes to your computer, answer yes.

You will know when you have done this right because the title bar of the Command Prompt window will say "Administrator: Command Prompt".

Linux users will probably know what to do. If their system uses sudo, the command can be entered as follows:

sudo java -jar owl-server-installer.jar

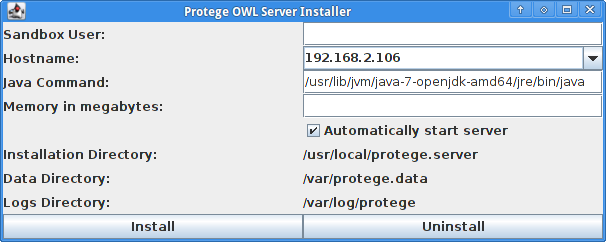

The sudo command will work for mac users also. Alternatively, if the root account has a password associated with it, they can obtain a root prompt with the su command. When you run the installer, you should see a screen such as the following.

There are four fields that need to be filled in (they are not required for the undeploy or the uninstall):

- Sandbox User

- This is a user that ideally does not have any privileges and does not have access to any sensitive files. The Protege OWL Server will run under this user id. If the server were to come under a successful attack, the attacker would have gained access only to this account and would still not have gained meaningful access to the system. This is a standard technique for sandboxing a server and is used by some well-known servers (e.g. Tomcat). Windows users with domains enabled should remember to specify the domain in this user id (e.g. at Stanford my user id would be win\tredmond, meaning the user tredmond in the domain win).

- Hostname

- This is the name of your machine in a form that can be accessed by clients. Generally the suggested hostname will need some sort of editing.

- Java Command

- The suggested Java command is usually reasonable. It is taken from the Java that is being used to run the installer. Windows users should use the Oracle Java rather than the native Windows Java.

- Memory in megabytes

- The number of megabytes of memory that you want to give to the server.

Once these fields are filled in there are four buttons that can be clicked on:

- Deploy

- This will install the Protege OWL Server, start it and arrange for it to be available after a reboot. This option is not yet available for Windows users.

- Install

- This will simply install the Protege OWL Server but not start it. This might be useful for Linux or OS X users who want to run the command line utilities. Windows users will have access to a script (in the bin directory of the distribution) that can be used to start the server.

- Undeploy

- This will stop the server and prevent it from starting on a reboot. It will leave the server installed.

- Uninstall

- This will completely uninstall the server. It will leave the data directory intact because this may contain some useful user data.

A detailed description of how the installed Protege server is configured on your system can be found here.

Post-Installation

An important configuration file for the running server is the UsersAndGroups file, which is located in the "configuration" directory of the Protege OWL Server distribution (/usr/local/protege.server on OS X and Linux and "C:\Program Files\Protege OWL Server" on Windows). This file defines the users and their passwords and will probably need to be modified for real server operations. The server only reads this file on startup, so the server needs to be restarted if this file is changed.

After the server is deployed, it is probably useful to look in the logs directory. At the end of the install, the installer reports the location of the logs directory. For Linux and OS X installs the logs are located in "/var/log/protege", and for Windows the logs are located in "C:\ProgramData\Protege OWL Server\logs". The logs from a successful server start should look something like this:

    Sun Jan 13 16:25:27 PST 2013-INFO: Server configuration started.
    Sun Jan 13 16:25:27 PST 2013-INFO: User id: protege
    Sun Jan 13 16:25:27 PST 2013-INFO: Java: JVM 1.7.0_09-b30 Memory: 745M
    Sun Jan 13 16:25:27 PST 2013-INFO: Language: en, Country: US
    Sun Jan 13 16:25:27 PST 2013-INFO: Framework: Apache Software Foundation (1.5)
    Sun Jan 13 16:25:27 PST 2013-INFO: OS: linux (3.5.0-21-generic)
    Sun Jan 13 16:25:27 PST 2013-INFO: Processor: x86-64
    Sun Jan 13 16:25:27 PST 2013-INFO: Server configuration found
    Sun Jan 13 16:25:27 PST 2013-INFO: New server component factory: Basic Conflict Manager Factory
    Sun Jan 13 16:25:27 PST 2013-INFO: New server component factory: Plugin Infrastructure Management
    Sun Jan 13 16:25:27 PST 2013-INFO: New server component factory: Policy Components Factory
    Sun Jan 13 16:25:27 PST 2013-INFO: New server component factory: RMI Transport Factory
    Sun Jan 13 16:25:27 PST 2013-INFO: New server component factory: Core Server Factory
    Sun Jan 13 16:25:27 PST 2013-INFO: Server advertised via rmi on port 4875
    Sun Jan 13 16:25:27 PST 2013-INFO: Server exported via rmi on port 4875
    Sun Jan 13 16:25:27 PST 2013-INFO: Authentication service started
    Sun Jan 13 16:25:27 PST 2013-INFO: Basic Conflict Management started.
    Sun Jan 13 16:25:27 PST 2013-INFO: Server started

(The Java 6 version provides some extra redundant lines. One of the advantages of Java 7 is that it pays attention to the lines that configure the logger in the logging.properties file.) If there are exceptions this early, then something has probably gone wrong, and this may need to be debugged on the p4 mailing lists.
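A quick way to check a startup log without reading it line by line is to scan it for the final "Server started" message, the RMI port and anything that looks like an exception. The sketch below relies only on the line layout shown in the sample log (timestamp, a dash, the level, a colon, then the message). Treating every level other than INFO as a problem is an assumption, and summarize_startup is a hypothetical helper rather than a tool that comes with the server.

```python
import re

# Layout taken from the sample log: "<timestamp>-<LEVEL>: <message>"
LINE = re.compile(r"^(?P<time>.+?)-(?P<level>[A-Z]+): (?P<message>.*)$")
PORT = re.compile(r"Server exported via rmi on port (\d+)")


def summarize_startup(log_text):
    """Report whether the server started, on which RMI port, and any problems."""
    summary = {"started": False, "rmi_port": None, "problems": []}
    for raw in log_text.splitlines():
        line = raw.strip()
        match = LINE.match(line)
        if match is None:
            # Stack traces and other continuation lines have no level prefix.
            if "Exception" in line:
                summary["problems"].append(line)
            continue
        message = match.group("message")
        # Assumption: any level other than INFO deserves a closer look.
        if match.group("level") != "INFO" or "Exception" in message:
            summary["problems"].append(message)
        port = PORT.search(message)
        if port:
            summary["rmi_port"] = int(port.group(1))
        if message == "Server started":
            summary["started"] = True
    return summary


sample = (
    "Sun Jan 13 16:25:27 PST 2013-INFO: Server exported via rmi on port 4875\n"
    "Sun Jan 13 16:25:27 PST 2013-INFO: Server started\n"
)
print(summarize_startup(sample))
```

If "started" is false or "problems" is not empty, the relevant log lines are the ones worth quoting when asking for help on the mailing lists.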